Migrating a data center to DDR5 may matter more than other upgrades. Yet many people assume, somewhat vaguely, that DDR5 is simply a transitional step that will eventually replace DDR4. Processors will inevitably change with the arrival of DDR5 and gain new memory interfaces, as happened with every earlier DRAM generation from SDRAM through DDR4.

But DDR5 is more than just an interface change. It changes the concept of a processor’s memory system. In fact, the changes in DDR5 may be enough to justify an upgrade to a compatible server platform.

Why choose a new memory interface?

Since the advent of computers, computing problems have grown steadily more complex. That growth has driven up the number of servers, memory and storage capacity, CPU clock speeds, and core counts, and it has also pushed architectural change, most recently the adoption of disaggregation and AI technology.

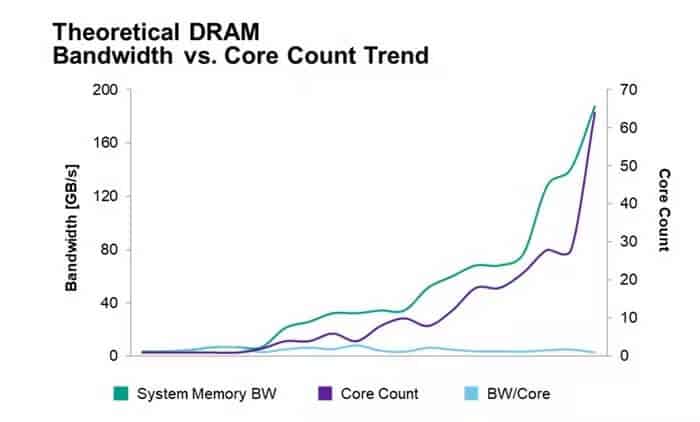

Some people might assume everything stays in step because all the numbers are increasing. However, DDR bandwidth has not kept pace with the growth in processor core counts, so the bandwidth available per core has actually been decreasing.
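The per-core squeeze is easy to see with a little arithmetic. The sketch below is illustrative only: the 200 GB/s total and the core counts are hypothetical figures chosen for the example, not measurements of any particular platform.

```python
def bandwidth_per_core(total_bandwidth_gbs: float, cores: int) -> float:
    """Return the memory bandwidth available to each core, in GB/s."""
    if cores <= 0:
        raise ValueError("cores must be positive")
    return total_bandwidth_gbs / cores


# Hypothetical platform: total memory bandwidth held constant at 200 GB/s
# while the core count grows from one processor generation to the next.
TOTAL_GBS = 200.0

if __name__ == "__main__":
    for cores in (16, 32, 64):
        share = bandwidth_per_core(TOTAL_GBS, cores)
        print(f"{cores:3d} cores -> {share:.3f} GB/s per core")
```

With the total held fixed, every doubling of the core count halves each core's share, which is why a faster memory interface is needed just to stand still.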

Datasets keep growing, especially for HPC, gaming, video encoding, machine learning inference, big data analytics, and databases. Memory bandwidth can be raised by adding more memory channels to the CPU, but that consumes more power. The number of processor pins also limits this approach, so the channel count cannot be increased indefinitely.

Some applications, particularly high-core-count subsystems such as GPUs and dedicated AI processors, use some form of high-bandwidth memory (HBM). This technology moves data from stacked DRAM chips to the processor over a 1024-bit memory interface, which makes it a good solution for memory-intensive applications like AI. In these applications, the processor and memory need to be as close together as possible to enable fast transfers. However, HBM is also more expensive, and the chips cannot be packaged as a removable or upgradeable module.

DDR5 memory, generally available this year, is designed to improve the channel bandwidth between processor and memory while supporting upgradeability.

Bandwidth and Latency

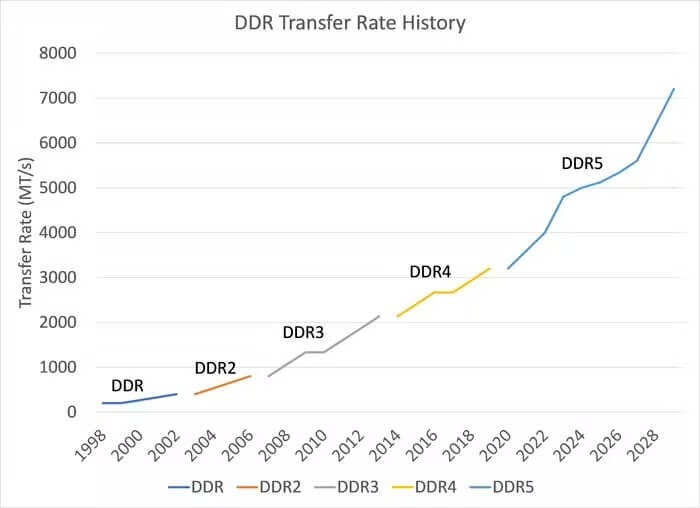

DDR5 offers a higher transfer rate than any previous DDR generation; in fact, DDR5 offers more than twice the rate of DDR4. DDR5 also introduces architectural changes so that these transfer rates deliver more than a simple speed increase and improve the observed efficiency of the data bus.

Also, the burst length is doubled from BL8 to BL16, which allows each module to be split into two independent sub-channels, essentially doubling the number of channels available in the system. You get not only a higher transfer rate but also a redesigned memory channel that outperforms DDR4 even at the same transfer rate.
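To see why the longer burst and the sub-channels go together, it helps to count the bytes delivered per burst. The sketch below assumes a 64-bit data bus for a DDR4 channel and a 32-bit data bus for each DDR5 sub-channel (ECC bits excluded); those widths are not stated in this article and are included here as assumptions.

```python
def bytes_per_burst(data_width_bits: int, burst_length: int) -> int:
    """Bytes transferred by one burst on a bus of the given data width."""
    return (data_width_bits // 8) * burst_length


# Assumed bus widths (data bits only, ECC excluded).
DDR4_CHANNEL_BITS = 64      # one channel per module
DDR5_SUBCHANNEL_BITS = 32   # two sub-channels per module

ddr4_burst = bytes_per_burst(DDR4_CHANNEL_BITS, burst_length=8)      # BL8
ddr5_burst = bytes_per_burst(DDR5_SUBCHANNEL_BITS, burst_length=16)  # BL16

if __name__ == "__main__":
    print(f"DDR4 channel, BL8:      {ddr4_burst} bytes per burst")
    print(f"DDR5 sub-channel, BL16: {ddr5_burst} bytes per burst")
```

Under these assumptions both cases deliver the same number of bytes per burst, so a half-width sub-channel can still satisfy one request in a single burst while the second sub-channel serves a different request in parallel.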

Memory-intensive processes will get a big boost from the move to DDR5, and many of today’s data-intensive workloads, particularly AI, databases, and online transaction processing (OLTP), fit that description.

Transfer speed is also important. Currently, DDR5 memory speeds range from 4,800 to 6,400 MT/s, with higher transfer speeds expected as the technology matures.
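A transfer rate in MT/s can be converted into a theoretical peak bandwidth by multiplying it by the number of bytes moved per transfer. The sketch below assumes 64 data bits per module (8 bytes per transfer), which is an assumption not stated in this article, and it gives an upper bound rather than the throughput an application will actually observe.

```python
def peak_bandwidth_gbs(mt_per_s: int, data_width_bits: int = 64) -> float:
    """Theoretical peak bandwidth in GB/s for a given transfer rate.

    mt_per_s        -- transfer rate in megatransfers per second
    data_width_bits -- data bits moved per transfer (assumed 64 per module)
    """
    bytes_per_transfer = data_width_bits // 8
    return mt_per_s * bytes_per_transfer / 1000  # MB/s -> GB/s


if __name__ == "__main__":
    for rate in (4800, 6400):
        print(f"{rate} MT/s -> {peak_bandwidth_gbs(rate):.1f} GB/s peak per module")
```

For the speed range quoted above, this works out to roughly 38.4 GB/s at 4,800 MT/s and 51.2 GB/s at 6,400 MT/s per module.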

Power Consumption

DDR5 uses a lower voltage than DDR4: 1.1 V instead of 1.2 V. While an 8% difference doesn’t sound like much, it becomes significant when squared to calculate the power consumption ratio: 1.1² / 1.2² ≈ 84%, which equates to a power saving of roughly 16%, all else being equal.
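The voltage-squared ratio can be checked in a few lines. This sketch assumes that power scales with the square of the supply voltage and that everything else (frequency, switched capacitance, activity) stays the same, which is a simplification of real module behavior.

```python
def voltage_power_ratio(v_new: float, v_old: float) -> float:
    """Ratio of new to old power, assuming power is proportional to V squared."""
    return (v_new / v_old) ** 2


ratio = voltage_power_ratio(1.1, 1.2)   # DDR5 vs. DDR4 supply voltage
saving = 1.0 - ratio

if __name__ == "__main__":
    print(f"power ratio:  {ratio:.3f}")
    print(f"power saving: {saving:.1%}")
```

The ratio works out to about 0.84, that is, a saving of about 16% from the voltage change alone.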

The architectural changes introduced by DDR5 optimize bandwidth efficiency and increase transfer rates; however, these gains are difficult to quantify without measuring the specific application environment in which the technology is deployed. Even so, end users should see the energy per data bit improve thanks to the improved architecture and higher transfer speeds.

In addition, the DIMM regulates its own voltage, which reduces the power-delivery requirements placed on the motherboard and provides additional power savings.

For data centers, server power consumption and cooling costs are constant concerns, and when these factors are considered, DDR5 as a more power-efficient module can be a reason to upgrade.

ECC (Error Correction Code)

DDR5 also includes on-die error correction, which addresses a concern many users share: as DRAM processes continue to shrink, single-bit error rates rise and overall data integrity is harder to maintain.

For server applications, on-die ECC corrects single-bit errors during read commands, before the data leaves the DDR5 device. This moves part of the ECC work from the system-level correction algorithm into the DRAM, reducing the load on the system.
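The idea of correcting a single flipped bit before data is returned can be illustrated with a toy Hamming(7,4) code. This is only a sketch of the general single-error-correction technique: real DDR5 devices use a much wider code whose details are not covered here, and the function names below are hypothetical.

```python
def hamming74_encode(data_bits):
    """Encode 4 data bits into a 7-bit codeword (bit positions 1..7)."""
    d1, d2, d3, d4 = data_bits
    p1 = d1 ^ d2 ^ d4   # parity over positions 1, 3, 5, 7
    p2 = d1 ^ d3 ^ d4   # parity over positions 2, 3, 6, 7
    p4 = d2 ^ d3 ^ d4   # parity over positions 4, 5, 6, 7
    return [p1, p2, d1, p4, d2, d3, d4]


def hamming74_correct(codeword):
    """Correct up to one flipped bit; return (data_bits, error_position).

    error_position is the 1-based position of the corrected bit,
    or 0 if no error was detected.
    """
    c = list(codeword)
    s1 = c[0] ^ c[2] ^ c[4] ^ c[6]
    s2 = c[1] ^ c[2] ^ c[5] ^ c[6]
    s4 = c[3] ^ c[4] ^ c[5] ^ c[6]
    syndrome = s1 + 2 * s2 + 4 * s4
    if syndrome:
        c[syndrome - 1] ^= 1   # flip the faulty bit back
    return [c[2], c[4], c[5], c[6]], syndrome


if __name__ == "__main__":
    stored = hamming74_encode([1, 0, 1, 1])
    stored[4] ^= 1                      # simulate a single-bit fault in the array
    data, position = hamming74_correct(stored)
    print(f"recovered data {data}, corrected position {position}")
```

The check bits are stored alongside the data, and on a read the syndrome points directly at the faulty bit, so the correction happens before the data is handed on.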

DDR5 also introduces error check and scrub, in which DRAM devices, when the feature is enabled, read their internal data and write back corrected data.

Conclusion

While DRAM interfaces aren’t typically the first thing data centers consider when rolling out upgrades, DDR5 is worth a closer look as the technology promises to significantly improve performance and save power.

DDR5 is a foundational technology that helps early adopters seamlessly migrate to the scalable and composable data centers of the future. Business and IT leaders must evaluate DDR5 and determine how and when to migrate from DDR4 to DDR5 to complete their data center transformation plans.
