SSDs have become the solution of choice for more and more businesses due to their high read and write performance and low latency. They are essential in databases, virtualization, application acceleration, big data, cloud computing, and even artificial intelligence. Enterprise SSDs are often required to operate in demanding environments with high concurrency, high stress, and 24/7 operation, and their reliability is a primary concern of enterprise users.

Reliability refers to the ability of a component or system to perform its intended function for a specified period of time under specified operating conditions. For enterprise SSDs, it is a key metric: it not only directly determines basic indicators such as delivery quality and failure rate, but also plays a key role in protecting data availability and consistency.

▮ Reliability quantification index – MTBF

SSD “reliability” is typically quantified by MTBF. MTBF stands for Mean Time Between Failures, i.e. the ratio of the cumulative working time of the product during its full service phase to the number of failures. It reflects how well the product holds up over time: the fewer the failures, the higher the MTBF and the higher the product reliability.

Compared to consumer SSD products, enterprise SSDs face greater reliability challenges. According to the OCP (Open Compute Project), the MTBF for enterprise SSDs deployed in data centers should be 2,000,000 hours, which is the current standard for enterprise SSDs. However, MTBF has to be verified by actual runtime testing and cannot be derived from theory alone. It is impossible to run drives for 2 million hours, let alone repeat such a validation, using traditional methods. So how do you arrive at an MTBF of 2 million hours?

The answer is statistical extrapolation: a specific sample size is tested for a specified period under acceleration factors (such as write volume acceleration and operating temperature acceleration). The process simulates typical user scenarios, verifies theoretical values through real tests, and validates product quality in advance. The rigor of this runtime testing directly determines whether the MTBF “reliability index” is itself reliable.

▮ Characterization Period of MTBF

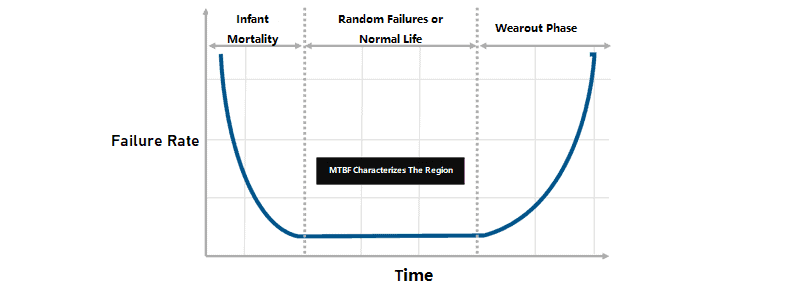

Like most electronic products, SSDs follow the bathtub curve (failure rate curve), which is divided into three critical periods.

- Infant Mortality: A high failure rate occurs when the product is newly manufactured and first put into use. To ensure that SSDs shipped to customers meet enterprise-class reliability standards, enterprise-class SSD manufacturers run all products on the production line for a specified period of time to screen out early failures before shipment, so that customers do not encounter them.

- Random Failures or Normal Lifetime: This phase corresponds to officially shipped products with low and stable failure rates. The MTBF reliability index describes this period, i.e. the stage in which the product is in stable use.

- Wearout Phase: Due to wear and aging, the failure rate of the product increases exponentially over time. At this point, the SSD reports that its useful life has expired. Although the SSD can continue to be used, the number of bad blocks increases rapidly as the P/E (program/erase) cycle count increases, and the over-provisioned (OP) space of the SSD is gradually exhausted, increasing the failure rate of the device. For enterprise-class SSDs, it is not recommended to continue using products that are in the wearout phase.

▮ MTBF=MTTF?

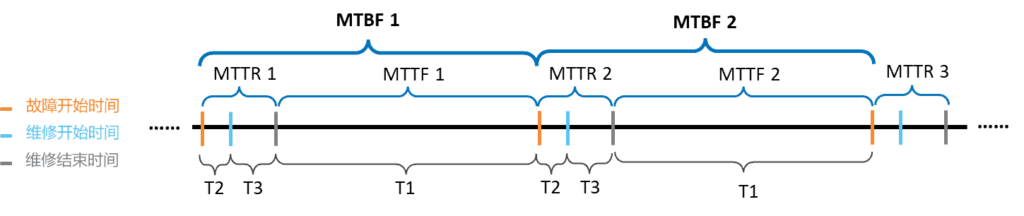

Besides MTBF, MTTF is another reliability descriptor you may have heard of. For a serviceable device, MTBF = MTTF + MTTR, and the relationship is as follows:

- Mean Time To Failure (MTTF): Indicates the average time the system operates normally before a failure occurs. It is the average of all the periods of time between the start of normal system operation and the occurrence of a failure. MTTF = ∑ T1 / N

- Mean Time To Repair (MTTR): Indicates the average time between system failure and the end of maintenance. MTTR = ∑ (T2 + T3) / N

- Mean Time Between Failures (MTBF): Indicates the average time between two system failures, including the maintenance time. MTBF = ∑ (T2 + T3 + T1) / N

Because MTTR is usually much smaller than MTTF, MTBF is approximately equal to MTTF.
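As a minimal sketch of the three averages above, the snippet below computes MTTF, MTTR, and MTBF from a hypothetical maintenance log. The sample durations are made up for illustration, and T2 and T3 are assumed to be the two parts of the repair interval (for example, waiting time and repair time), since the text does not define them.

```python
# Hypothetical log: one tuple per failure cycle, all values in hours.
# t1 = normal operating time before the failure,
# t2 + t3 = the two parts of the repair interval (assumed meaning).
cycles = [
    (1200.0, 2.0, 4.0),
    (1500.0, 1.0, 3.0),
    (900.0, 3.0, 5.0),
]

def mean_times(cycles):
    """Return (MTTF, MTTR, MTBF) using the sums defined in the list above."""
    n = len(cycles)
    mttf = sum(t1 for t1, _, _ in cycles) / n
    mttr = sum(t2 + t3 for _, t2, t3 in cycles) / n
    mtbf = sum(t1 + t2 + t3 for t1, t2, t3 in cycles) / n
    return mttf, mttr, mtbf

mttf, mttr, mtbf = mean_times(cycles)
print(f"MTTF={mttf:.1f} h, MTTR={mttr:.1f} h, MTBF={mtbf:.1f} h")
```

With repair times of a few hours against operating times of around a thousand hours, MTBF and MTTF differ by well under 1%, which is why the two are often used interchangeably.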

▮ Where do the 2,000,000 hours in the MTTF formula come from?

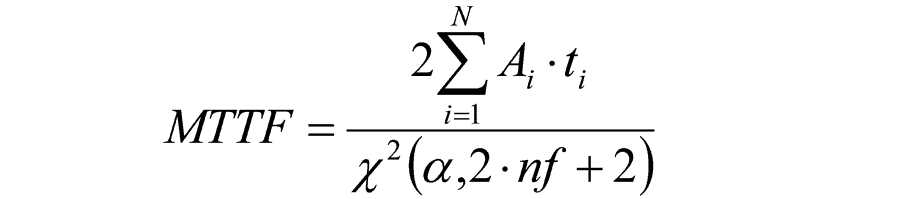

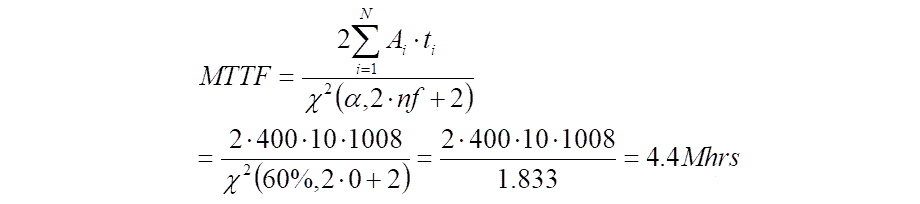

In the simplest case, the terms of the MTTF formula are defined as follows:

- Ai is the acceleration factor of the SSD

- ti is the SSD test time

- nf is the number of failed SSDs

- α is the confidence limit (60%)

- χ² is the chi-squared distribution
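For readers who cannot see the figures, the terms above fit the chi-squared MTTF estimate commonly used for time-terminated life tests. The form below is a reconstruction under that assumption, not a transcription of the figure; it does reproduce the worked example later in this article.

$$
\mathrm{MTTF} = \frac{2 \sum_{i} A_i \, t_i}{\chi^2\left(\alpha,\ 2 n_f + 2\right)}
$$

Here the sum runs over all tested drives, and χ²(α, 2nf + 2) is assumed to denote the α-quantile of the chi-squared distribution with 2nf + 2 degrees of freedom.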

Acceleration factors in the above equation usually fall into three categories:

- No acceleration: A = 1, usually used for firmware failures

- Total Bytes Written (TBW) acceleration factor: Increases the volume of data written to consume the drive's write endurance faster

- Temperature acceleration factor: Increases the test environment temperature to accelerate fault occurrence

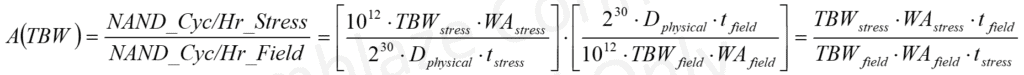

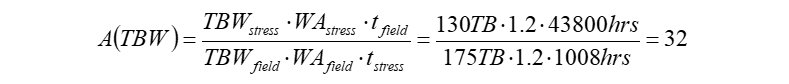

1. TBW acceleration factor

TBW (Total Bytes Written) expresses the write endurance of an SSD. Taking a PBlaze6 SSD with an endurance of 1.5 DWPD and a user capacity of 3.84 TB as an example, the total write volume over five years is 10.5 PB, equivalent to 5.76 TB written per day. If you increase the amount of data written per day (stress acceleration), the lifespan of the SSD is consumed faster and failures occur sooner. The TBW acceleration factor is calculated as follows:

Assume a 100 GB SSD can be used for 5 years (43,800 hours) in a typical service scenario, and that its rated lifespan is 175 TBW as defined in the product specifications. If 130 TB of data is written in 1,008 hours with a write amplification of 1.2, the TBW acceleration factor is about 32. If more data is written in a shorter period of time, the TBW acceleration factor increases accordingly.
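The two calculations above can be checked with a short sketch. It treats the TBW acceleration factor as the ratio of the test write rate to the rated write rate, and it assumes the same write amplification applies to both workloads so that it cancels out. That assumption is mine, not stated in the text, but it does reproduce the factor of about 32. The function names are illustrative.

```python
HOURS_PER_YEAR = 8760

def tbw_from_dwpd(dwpd, capacity_tb, years):
    """Total terabytes written over the warranty period."""
    return dwpd * capacity_tb * 365 * years

def tbw_acceleration(test_tb, test_hours, rated_tbw, rated_hours):
    """Ratio of the test write rate to the rated write rate.

    Assumes equal write amplification in test and rated use,
    so the write amplification term cancels.
    """
    return (test_tb / test_hours) / (rated_tbw / rated_hours)

# PBlaze6 example: 1.5 DWPD, 3.84 TB, 5 years -> roughly 10.5 PB
pblaze6_tbw = tbw_from_dwpd(1.5, 3.84, 5)
print(f"{pblaze6_tbw / 1000:.1f} PB")

# 100 GB example: 130 TB in 1008 h versus 175 TBW over 5 years
factor = tbw_acceleration(130, 1008, 175, 5 * HOURS_PER_YEAR)
print(f"TBW acceleration factor: {factor:.1f}")  # about 32
```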

2. Temperature acceleration factor

Due to the inherent nature of NAND, data retention decreases as temperature increases. According to the Arrhenius equation, one year (8,760 hours) at a room temperature of 40°C is equivalent to 52 hours in an 85°C aging chamber.

JESD 22-A108 addresses the effect of temperature on devices over time. HTOL (High-Temperature Operating Life) tests can be performed to determine the reliability of SSDs operating at high temperatures for a long period of time. The standard states that devices must be stressed at a junction temperature of 125°C unless otherwise specified. However, enterprise SSDs are usually equipped with high-temperature protection logic to prevent high temperatures from corrupting the data stored in NAND and damaging components. Therefore, the actual operating temperature of SSDs does not reach 125°C.

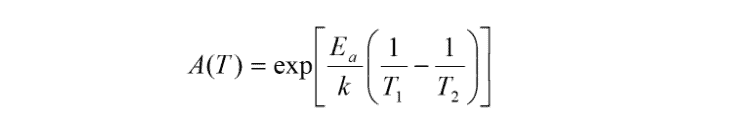

The terms used to calculate the temperature acceleration factor are as follows:

- Ea is the activation energy of the failure mode, which is generally 0.7 eV

- k is Boltzmann’s constant, 8.617 × 10⁻⁵ eV/K

- T₁ is the working temperature (the standard value is 55°C or 328 K)

- T₂ is the test acceleration temperature
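A minimal sketch of the temperature acceleration factor, assuming the standard Arrhenius form AF = exp(Ea/k × (1/T₁ − 1/T₂)) with temperatures in kelvin. The 85°C test temperature and the 1.1 eV activation energy in the second call are assumptions chosen for illustration; the latter happens to reproduce the "one year at 40°C is about 52 hours at 85°C" figure quoted earlier.

```python
import math

BOLTZMANN_EV_PER_K = 8.617e-5  # k, in eV/K

def celsius_to_kelvin(celsius):
    return celsius + 273.15

def arrhenius_factor(ea_ev, use_c, stress_c):
    """Arrhenius acceleration factor between a use and a stress temperature."""
    t_use = celsius_to_kelvin(use_c)
    t_stress = celsius_to_kelvin(stress_c)
    return math.exp(ea_ev / BOLTZMANN_EV_PER_K * (1.0 / t_use - 1.0 / t_stress))

# Operating-life case from the list above: Ea = 0.7 eV, T1 = 55 C,
# T2 = 85 C (assumed test temperature).
af_operating = arrhenius_factor(0.7, 55, 85)
print(f"Operating acceleration factor: {af_operating:.1f}")

# Retention cross-check: Ea = 1.1 eV is an assumption, not from the text.
af_retention = arrhenius_factor(1.1, 40, 85)
equivalent_hours = 8760 / af_retention
print(f"One year at 40 C is about {equivalent_hours:.0f} h at 85 C")
```

Because the factor depends exponentially on Ea, the activation energy chosen for a given failure mode dominates the result, so it should come from the relevant standard or from characterization data rather than from this sketch.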

3. Example of MTTF calculation

Assuming a sample size of 400, a test time of 1,008 hours, an acceleration factor Ai = A(TBW) × A(T) of 10, zero failures, and a confidence level of 60%, the result is MTTF ≈ MTBF ≈ 4,400,000 hours.

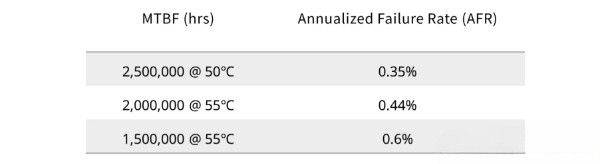
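The example can be reproduced with a few lines of standard-library Python. This is a sketch that assumes the common chi-squared estimate MTTF = 2 × Σ(Ai × ti) / χ²(α, 2nf + 2) and the same acceleration factor for every drive. The quantile helper is hand-rolled for illustration, using the closed-form CDF that exists for even degrees of freedom, so no third-party library is needed.

```python
import math

def chi2_quantile(confidence, failures):
    """Quantile of the chi-squared distribution with 2*(failures+1) dof.

    For even dof the CDF is 1 - exp(-x/2) * sum_{k=0..failures} (x/2)^k / k!,
    which is inverted here by bisection.
    """
    def cdf(x):
        half = x / 2.0
        term, total = 1.0, 1.0
        for k in range(1, failures + 1):
            term *= half / k
            total += term
        return 1.0 - math.exp(-half) * total

    lo, hi = 0.0, 1.0
    while cdf(hi) < confidence:
        hi *= 2.0
    for _ in range(200):
        mid = (lo + hi) / 2.0
        if cdf(mid) < confidence:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2.0

def mttf_hours(samples, test_hours, accel, failures, confidence):
    """Lower-bound MTTF estimate from an accelerated life test."""
    device_hours = samples * test_hours * accel
    return 2.0 * device_hours / chi2_quantile(confidence, failures)

result = mttf_hours(samples=400, test_hours=1008, accel=10,
                    failures=0, confidence=0.60)
print(f"MTTF is about {result:,.0f} hours")  # roughly 4.4 million
```

Passing a non-zero `failures` value shows how quickly the demonstrated MTTF drops when even one drive fails during the test.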

Note that MTBF is strongly temperature dependent. This is also reflected in the OCP Datacenter NVMe® SSD specification:

- MTBF 2,500,000 hours (AFR ≤ 0.35%) corresponds to an SSD operating temperature range of 0°C to 50°C

- MTBF 2,000,000 hours (AFR ≤ 0.44%) corresponds to an SSD operating temperature range of 0°C to 55°C

But there is always a gap between theory and reality. In practice, an overall acceleration factor of 10× is difficult to achieve in MTBF testing. The TBW acceleration factor only applies to the lifespan of the NAND dies and the related hardware. Other parts, such as the circuitry and the physical interface, must also be covered in a practical test, and they can only be accelerated by temperature. As a result, demonstrating an MTBF of 2 million hours requires at least 2,000 samples running for more than 1,000 hours under the applicable acceleration factors.

▮ What is the relationship between MTBF and AFR?

In addition to the MTBF index, there are other quantitative reliability indicators such as failure rate (λ) and annualized failure rate (AFR). AFR and MTBF can be converted into each other.

- Failure Rate λ: When choosing the key components of an SSD, make sure the failure rate λ of each component meets the standard. Compared to the failure rate index, the definition of MTBF is more direct and better suited to system-level reliability.

- AFR: Annualized failure rate, which gives a better sense of the likelihood of a drive failing in any given year.

1. The MTBF and AFR transformation formulas are as follows:

- MTBF (hours) = 1 / λ (per hour)

- MTBF (years) = 1 / (λ (per hour) × 24 × 365)

- AFR = 365 × 24 hours × λ (per hour) = 8760 hours / MTBF (hours)

The corresponding relationship between MTBF and AFR values is as follows:

Enterprise-class SSD reliability is MTBF ≥ 2,000,000 hours (@55°C), which corresponds to an annualized failure rate AFR ≤ 0.44%. In terms of FFR (Functional Failure Requirement), the cumulative SSD failure rate over the entire lifetime (taking the 5-year warranty as reference) is ≤ 2.2%.

The entire series of DiskMFR Enterprise SSDs is specified at 2,000,000 hours MTBF at 55°C and 2,500,000 hours MTBF at 50°C to meet the requirements of stable 24×7 operation, with at least 3 months of power-off data retention in a 40°C environment and an uncorrectable bit error rate (UBER) of less than 1E-17.
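The conversion is simple enough to check in a few lines. The sketch below applies AFR = 8760 / MTBF to the two MTBF levels mentioned in this article and, as a simplifying assumption, approximates the 5-year cumulative failure rate as five times the AFR.

```python
HOURS_PER_YEAR = 24 * 365  # 8760

def afr_from_mtbf(mtbf_hours):
    """Annualized failure rate as a fraction, using AFR = 8760 / MTBF."""
    return HOURS_PER_YEAR / mtbf_hours

def mtbf_from_afr(afr):
    """Inverse conversion: MTBF in hours from an AFR given as a fraction."""
    return HOURS_PER_YEAR / afr

for mtbf in (2_000_000, 2_500_000):
    afr = afr_from_mtbf(mtbf)
    # Assumption: cumulative failure over 5 years is roughly 5 x AFR.
    print(f"MTBF {mtbf:,} h -> AFR {afr:.2%}, 5-year cumulative {5 * afr:.2%}")
```

The linear form is an approximation that holds well when the AFR is small, as it is here.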

Frequently Asked Questions (FAQs)

Q1: What is SSD reliability?

A1: SSD reliability refers to the ability of a solid-state drive (SSD) to perform consistently over time without suffering from data corruption, errors, or failures. Reliable SSDs are essential for storing important data and ensuring that it remains accessible and secure.

Q2: What is MTBF and how does it relate to SSD reliability?

A2: MTBF (mean time between failures) is a measure of the expected operating time of a component or system between failures. In the context of SSDs, MTBF is used to estimate the average amount of time that a drive can operate before experiencing a failure. However, MTBF is not always an accurate predictor of SSD reliability, as other factors such as workload and environmental conditions can also impact drive lifespan.

Q3: Are SSDs more reliable than HDDs?

A3: SSDs are generally considered to be more reliable than hard disk drives (HDDs) due to their lack of moving parts, which reduces the risk of mechanical failures. Additionally, SSDs are less susceptible to physical damage and data loss due to shock, vibration, and temperature fluctuations.

Q4: What factors can impact SSD reliability?

A4: Several factors can impact SSD reliability, including workload intensity, temperature, power loss, firmware issues, and manufacturing defects. In addition, the type and quality of NAND flash memory used in an SSD can also impact its reliability and performance.

Q5: How can I improve the reliability of my SSD?

A5: There are several steps you can take to improve the reliability of your SSD, including monitoring drive health and performance, avoiding extreme temperatures and physical shocks, using a high-quality power supply, updating firmware and drivers regularly, and backing up important data regularly to a separate storage device. Additionally, using an SSD with high-quality NAND flash memory and a proven track record of reliability can help minimize the risk of data loss and drive failure.

References:

- JESD218, Solid-State Drive (SSD) Requirements and Endurance Test Method

- JESD 22-A108, Temperature, Bias, and Operating Life

- OCP Datacenter NVMe® SSD Specification Version 2.0

- Calculating Reliability using FIT & MTTF: Arrhenius HTOL Model