This article explains how bad blocks arise, how SSDs detect and manage them, what problems exist in the bad block management policy recommended by vendors, which management methods work better, why formatting a disk does not cause the bad block table to be lost, and what risks arise after an SSD is repaired.

▶ Overview

Bad block management affects both SSD reliability and efficiency. The bad block management practices recommended by Nand Flash manufacturers are not always reasonable, and if abnormal conditions are not properly considered during product design, unexpected bad blocks will often appear.

For example, after testing many SSDs with different controllers, we found that new bad blocks caused by abnormal power loss are very common. Searching for “abnormal power produce bad block” or similar phrases shows that the problem appears not only during testing but also frequently for end users.

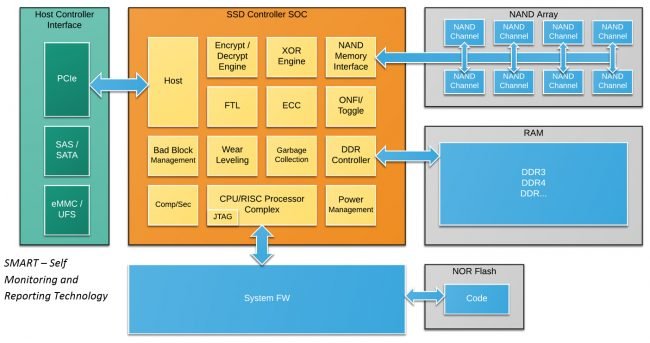

▶ Who manages the bad block?

For a controller without a dedicated flash file system, bad blocks are managed by the SSD controller firmware. Where a dedicated flash file system exists, bad blocks can be managed by that file system or by the driver.

▶ Three types of Bad blocks

1. Factory bad blocks, also called initial bad blocks: blocks that do not meet the manufacturer’s published standards, or that fail the manufacturer’s testing, and are marked as bad by the manufacturer before shipment. Some factory bad blocks can be erased; others cannot.

2. New bad blocks, or bad blocks added during use: blocks that go bad because of wear during use.

3. False bad blocks: blocks misjudged as bad by the controller for reasons such as abnormal power loss.

Not all new bad blocks are caused by wear. If the SSD has no protection against abnormal power loss, the controller may misjudge blocks as bad or genuinely new bad blocks may be generated. Without such protection, if the Lower page has been programmed successfully and power is suddenly lost while the Upper page is being programmed, the data on the Lower page will be corrupted. If the number of bit errors exceeds the error correction capability of the SSD’s ECC, the data cannot be read correctly, and the controller identifies the Block as a bad block and adds it to the Bad Block table.

Some new bad blocks can be erased. After a new bad block is erased, writing data to it may not fail again, because the error depends on the pattern of the data written: a block that fails with one pattern may not fail with another.

▶ Percentage of factory bad blocks in the total Device

We consulted several Nand Flash manufacturers about the proportion of factory bad blocks in a whole Device. The common answer was that the factory bad block ratio does not exceed 2%, and that the manufacturer leaves some margin so that the bad block ratio still does not exceed 2% even after the maximum P/E cycle count promised by the manufacturer is reached. In practice, guaranteeing 2% does not seem easy: one new sample we received had a bad block ratio above 2%, measured at 2.55%.

▶ The bad block determination method

① How factory bad blocks are determined

A bad block scan checks whether the byte at the address specified by the manufacturer holds the FFh flag. If the byte is not FFh, the block is a bad block.

The location of the bad block identifier is roughly the same for each vendor, but different for SLC and MLC. Take Micron as an example:

1.1 For SLC with small pages (528 Byte), check whether the sixth Byte in the spare area of the first page of each Block is FFh. If it is not, the Block is a bad block.

1.2 For SLC with large pages (2112 Byte or larger), check whether the first and sixth Bytes in the spare area of the first page of each Block are FFh. If they are not, the Block is a bad block.

1.3 For MLC, check whether the first or second Byte in the spare area of the first page and the last page of each Block is FFh. If it is FFh, the Block is a good block; if it is not, the Block is a bad block.
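The scan rules above can be sketched roughly as follows. This is only an illustration: `read_spare(block, page)` is a hypothetical callback that returns the spare-area bytes of a page, the rule names are invented here, and for MLC the sketch assumes the marker sits in the first spare Byte (the actual Byte depends on the part, so the datasheet decides).

```python
GOOD_MARK = 0xFF

# Pages and zero-based spare-area byte offsets to check for each flash type.
# These mirror the rules in the text; the MLC offset (first byte) is an assumption.
SCAN_RULES = {
    "slc_small": {"pages": ("first",), "offsets": (5,)},
    "slc_large": {"pages": ("first",), "offsets": (0, 5)},
    "mlc": {"pages": ("first", "last"), "offsets": (0,)},
}


def is_factory_bad(read_spare, block, pages_per_block, flash_type):
    """Return True if any checked marker byte of the block is not FFh."""
    rule = SCAN_RULES[flash_type]
    for which in rule["pages"]:
        page = 0 if which == "first" else pages_per_block - 1
        spare = read_spare(block, page)  # hypothetical device access
        if any(spare[offset] != GOOD_MARK for offset in rule["offsets"]):
            return True
    return False


def scan_factory_bad_blocks(read_spare, num_blocks, pages_per_block, flash_type):
    """Scan every block and return the list of factory bad block numbers."""
    return [
        block
        for block in range(num_blocks)
        if is_factory_bad(read_spare, block, pages_per_block, flash_type)
    ]
```

The scan only reads marker bytes, so it has to run before the blocks are erased for the first time; once a marker is erased it cannot be recovered.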

To illustrate, here is a diagram borrowed from a Hynix datasheet:

What data does a bad block contain? All zeros or all ones? Not necessarily. That may be true of factory bad blocks, but not of new bad blocks; otherwise, it would be impossible to hide data in a “bad block”.

Can the factory bad blocks be erased?

Some are “allowed” to be erased, while for others the vendor prohibits erasing. “Allowed” only means that the bad block identifier can be changed by sending an erase command; it does not mean that the bad block can be used.

Vendors strongly recommend that you do not erase bad blocks. Once the bad block flag is erased, it cannot be “recovered”. It is risky to write data on the bad block.

② How bad blocks added during use are determined

New bad blocks are determined from the result reported in the status register after an operation on the Nand Flash. When a Program or Erase operation is reported as FAIL, the SSD controller classifies the Block as a bad block.

2.1. An error when executing the erase command.

2.2. An error when executing the write command.

2.3. An error when executing the read command: if the number of bit errors exceeds the error correction capability of the ECC, the Block is judged to be a bad block.
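The three cases above amount to a small decision rule. The sketch below is a simplified model, not controller firmware: `Op`, `should_mark_bad`, and its parameters are names invented for illustration, and the ECC capability passed in is whatever the controller's ECC engine can correct.

```python
from enum import Enum


class Op(Enum):
    ERASE = "erase"
    PROGRAM = "program"
    READ = "read"


def should_mark_bad(op, status_fail=False, bit_errors=0, ecc_capability=0):
    """Decide whether a block should be added to the bad block table.

    Erase/Program: the status register reported FAIL.
    Read: the number of bit errors exceeds what the ECC can correct.
    """
    if op in (Op.ERASE, Op.PROGRAM):
        return status_fail
    return bit_errors > ecc_capability
```

For example, `should_mark_bad(Op.READ, bit_errors=45, ecc_capability=40)` reports the block as bad, while the same read with 40 or fewer bit errors does not. As the section on false bad blocks notes, a read failure caused by power loss can trigger this rule even though the block itself is not worn out.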

▶ Bad block management methods

Bad blocks are managed by creating and updating the Bad Block Table (BBT). Some engineers use a single table for both factory bad blocks and newly added bad blocks. Some manage the two kinds in separate tables. Others keep the original factory bad blocks in one table and keep a second, combined table of factory bad blocks plus newly added bad blocks.

The contents of the bad block table are not expressed consistently. Some representations are rather coarse, for example 0 for a good block and 1 for a bad block, or vice versa. Some engineers use more detailed descriptions, such as 00 for factory bad blocks, 01 for blocks that went bad on a Program failure, 10 for blocks that went bad on a Read failure, and 11 for blocks that went bad on an Erase failure.
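A minimal sketch of the more detailed representation might look like this. The class and method names are hypothetical. Because the four two-bit codes leave no value for "good", the sketch assumes the table lists only bad blocks and treats any block not in the table as good.

```python
from enum import IntEnum


class BadReason(IntEnum):
    FACTORY = 0b00       # marked bad by the manufacturer
    PROGRAM_FAIL = 0b01  # went bad on a Program failure
    READ_FAIL = 0b10     # went bad on a Read failure
    ERASE_FAIL = 0b11    # went bad on an Erase failure


class BadBlockTable:
    """In-memory bad block table: block number -> reason code."""

    def __init__(self):
        self._entries = {}

    def mark(self, block, reason):
        # Keep the first recorded reason; a block never becomes good again.
        self._entries.setdefault(block, BadReason(reason))

    def is_bad(self, block):
        return block in self._entries

    def reason(self, block):
        return self._entries.get(block)

    def bad_blocks(self):
        return sorted(self._entries)
```

Keeping the reason code makes it possible to tell factory bad blocks from new ones even when both share a single table.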

The bad block table is typically saved in a dedicated area (for example, Block0 page0 and Block1 page1). Reading the BBT directly at each power-on is relatively efficient. Because the Nand Flash itself can also be damaged, which may cause the BBT to be lost, the BBT is usually backed up. The number of copies varies: some designs keep 2 copies, others keep 8. In general, the number of copies can be worked out with a voting calculation from probability theory, but there should be at least two.
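One way redundant copies could be used at power-on is a bitwise majority vote. The sketch below assumes a bitmap BBT in which a 1 bit means "bad" and resolves ties conservatively as bad; both choices, and the function name `vote_bbt`, are assumptions made for illustration rather than anything the vendors prescribe.

```python
def vote_bbt(copies):
    """Rebuild a BBT bitmap from several stored copies by bitwise majority vote.

    copies: list of equal-length bytes objects; bit value 1 means "bad block".
    A tie (possible with an even number of copies) is resolved as bad.
    """
    if not copies:
        raise ValueError("at least one BBT copy is required")
    length = len(copies[0])
    if any(len(copy) != length for copy in copies):
        raise ValueError("all BBT copies must have the same length")

    total = len(copies)
    result = bytearray(length)
    for i in range(length):
        voted = 0
        for bit in range(8):
            ones = sum((copy[i] >> bit) & 1 for copy in copies)
            if 2 * ones >= total:
                voted |= 1 << bit
        result[i] = voted
    return bytes(result)
```

With three copies, a single corrupted copy is outvoted. With only two copies a disagreement cannot be settled by voting alone, which is why the tie rule matters there.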

Bad block management policies include bad block skipping policies and bad block replacement policies.

① Bad block skipping policy

- For initial bad blocks, the skipping policy uses the BBT to skip each bad block and saves the data directly to the next good block.

- For new bad blocks, the block is added to the BBT and the valid data in it is transferred to the next good block. The bad block is then skipped on every Read, Program, or Erase.
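The skipping policy can be sketched as a simple lookup. The function names here are illustrative only, and the bad block set stands in for whatever the BBT holds.

```python
def next_good_block(start, bad_blocks, num_blocks):
    """Return the first block at or after `start` that is not in the BBT."""
    for block in range(start, num_blocks):
        if block not in bad_blocks:
            return block
    raise RuntimeError("no good block left")


def build_skip_map(num_blocks, bad_blocks):
    """Map consecutive logical block indexes to physical blocks, skipping bad ones."""
    return [block for block in range(num_blocks) if block not in bad_blocks]
```

With 8 blocks and blocks 2 and 5 marked bad, `build_skip_map(8, {2, 5})` yields the physical order 0, 1, 3, 4, 6, 7, so logical block 2 lands on physical block 3.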

② Bad block replacement strategy (recommended by one Nand Flash vendor)

Bad block replacement means using good blocks in a reserved area to replace bad blocks generated during use. If an error occurs while programming page N, then under the bad block replacement policy the data from page 0 to page (N-1) is copied to the same positions in a reserved free Block (such as Block D). The data for page N, still held in the data register, is then written to page N of Block D.

Vendors recommend dividing the entire data area into two parts: a user-visible area for normal data operations, and a spare area that holds the bad block replacement data and the bad block table. The spare area accounts for 2% of the total capacity.

When a bad block appears, the FTL remaps the bad block address to a reserved block address rather than simply skipping to the next Block. Before every write to a logical address, the FTL first works out which physical address can be written and which addresses are bad blocks. If the target address is a bad block, the data is written to the corresponding reserved block instead.
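The replacement steps can be modeled in a few lines. This is a toy in-memory model under stated assumptions: `ReplacementFTL` and its methods are hypothetical names, the `fail` argument stands in for a FAIL result in the status register, and flash contents are a plain dictionary.

```python
class ReplacementFTL:
    """Toy model of bad block replacement from a reserved area."""

    def __init__(self, reserved_blocks):
        self.free_reserved = list(reserved_blocks)  # unused replacement blocks
        self.remap = {}                             # bad block -> replacement block
        self.flash = {}                             # (block, page) -> data

    def resolve(self, block):
        """Follow the remap chain to the block that currently holds the data."""
        while block in self.remap:
            block = self.remap[block]
        return block

    def program(self, block, page, data, fail=False):
        """Program one page; `fail` simulates a Program FAIL status."""
        target = self.resolve(block)
        if not fail:
            self.flash[(target, page)] = data
            return target
        return self._replace(target, page, data)

    def _replace(self, bad, page, data):
        if not self.free_reserved:
            raise RuntimeError("reserved area exhausted")
        new = self.free_reserved.pop(0)
        # Copy pages 0..N-1 to the same positions in the replacement block.
        for p in range(page):
            if (bad, p) in self.flash:
                self.flash[(new, p)] = self.flash[(bad, p)]
        # Write page N from the data still held in the register.
        self.flash[(new, page)] = data
        self.remap[bad] = new
        return new
```

Once block 7 has been remapped, every later access to it resolves to the replacement block, and a `RuntimeError` marks the point where the reserved area has been used up.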

DiskMFR has not seen any guidance on whether the 2% reserved area should be part of the OP area or an additional area, nor any description of whether it is dynamic or static. Assuming the 2% reserved area is an independent, static area, this approach has the following disadvantages:

- Directly reserving 2% of the area for bad block replacement reduces the available capacity and wastes space. At the same time, because fewer blocks are available, the remaining usable blocks wear faster on average;

- If bad blocks in the available area exceed 2%, all reserved blocks have been used as replacements, further bad blocks cannot be handled, and the SSD reaches the end of its life.

③ Bad Block Replacement Policy (some SSD vendors)

In real product designs, it is rare to see 2% of the blocks reserved as a dedicated bad block replacement area. Generally, free blocks in the OP (Over Provisioning) area are used to replace the bad blocks added during use. Take garbage collection as an example. When a Block is erased and the status register reports that the Erase failed, the bad block management mechanism adds the address of the Block to the list of new bad blocks, writes the valid data pages of the bad Block to a free Block in the OP area, updates the bad block management table, and skips the bad Block in favor of the next available Block on later writes.

OP size differs between vendors, application scenarios, and reliability requirements. There is a trade-off between OP and stability: the larger the OP, the more space is available during writes and garbage collection, the more stable the performance, and the smoother the performance curve. Conversely, the smaller the OP, the poorer the performance stability, but more space is available to the user, and more user space means lower cost.

Generally speaking, OP can be set anywhere from 5% to 50%, and 7% is a common proportion. Unlike the fixed 2% of blocks suggested by the manufacturer, the 7% OP is not tied to particular blocks but is distributed dynamically across all blocks, which is better for wear leveling.
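The garbage collection case can be sketched as below. The model is deliberately small and rests on assumptions: `reclaim_block` is a hypothetical name, `erase` is a callback that returns False when the status register reports FAIL, and the sketch copies valid pages out before erasing, as a garbage collector normally would.

```python
def reclaim_block(victim, flash, free_pool, bad_blocks, erase):
    """Reclaim one block during garbage collection.

    flash: dict mapping block number -> list of valid pages
    free_pool: list of free block numbers shared with the OP area
    bad_blocks: set acting as the list of new bad blocks
    erase: callable(block) -> True on success, False on Erase FAIL
    Returns the block that now holds the victim's valid pages.
    """
    if not free_pool:
        raise RuntimeError("no free block available")
    dest = free_pool.pop(0)
    flash[dest] = flash.pop(victim, [])  # relocate valid pages
    if erase(victim):
        free_pool.append(victim)         # block can be reused
    else:
        bad_blocks.add(victim)           # retire the block; it is skipped from now on
    return dest
```

A failed erase permanently removes one block from the shared pool, which is the mechanism by which new bad blocks gradually eat into the OP space.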
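As a rough illustration of where a figure near 7% can come from, the sketch below uses the commonly used definition of OP as spare capacity divided by user capacity. That definition and the function name are assumptions here; the article itself does not give a formula.

```python
def over_provisioning(physical_bytes, user_bytes):
    """OP ratio = (physical capacity - user capacity) / user capacity."""
    if user_bytes <= 0 or physical_bytes < user_bytes:
        raise ValueError("need 0 < user_bytes <= physical_bytes")
    return (physical_bytes - user_bytes) / user_bytes


# 128 GiB of raw flash exposed as 128 GB (decimal) of user space.
op = over_provisioning(128 * 2**30, 128 * 10**9)
```

Here `op` comes out at roughly 0.074, that is, about 7%, simply from the difference between binary and decimal gigabytes.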

④ SSD maintenance problems

Most SSD manufacturers do not own the controller technology. When such a manufacturer repairs a product, the common practice is to replace the faulty device and run the mass-production process again. In this case, the list of newly added bad blocks is lost, which means that bad blocks already present in the Nand Flash chips that were not replaced are no longer recorded. The operating system or sensitive data may then be written to a bad block area, which may cause the user’s operating system to crash. Even for a vendor that does own the controller, whether the existing bad block list is preserved depends on the vendor’s attitude toward its users.

⑤ Whether bad block generation affects the read/write speed and stability of SSDs

Factory bad blocks are isolated from the bit line, so they do not affect the erase and program speed of other blocks. However, as new bad blocks accumulate, the number of available blocks in the SSD decreases, so garbage collection runs more often. At the same time, the OP capacity shrinks, which seriously reduces garbage collection efficiency. Once bad blocks increase to a certain level, SSD performance deteriorates, mainly because of garbage collection.

▶ Conclusion

In conclusion, SSD bad block management plays a crucial role in ensuring the overall performance and reliability of solid-state drives. By implementing efficient algorithms and techniques, manufacturers are able to mitigate the negative effects of bad blocks and maximize the lifespan of SSDs.

Through the use of wear leveling, error correction codes, and proactive bad block management strategies, SSDs can maintain consistent performance and minimize the impact of data errors. These technologies enable the drive to distribute write and erase operations evenly across the NAND flash memory, reducing wear and preventing premature failure.

Additionally, the implementation of dynamic bad block management algorithms allows the SSD to identify and isolate faulty blocks, preventing them from affecting the performance of the entire drive. This proactive approach ensures that data integrity is maintained and that the SSD continues to operate optimally.

Furthermore, the impact of bad blocks on SSDs is not limited to individual drives. It can also affect RAID arrays and enterprise storage systems, leading to data loss and system downtime. By employing robust bad block management techniques, organizations can enhance the reliability and durability of their storage infrastructure, minimizing the risk of data loss and improving overall system performance.

Effective SSD bad block management is essential for maintaining the longevity and performance of solid-state drives. By implementing advanced algorithms and proactive strategies, manufacturers and organizations can mitigate the negative effects of bad blocks, ensuring data integrity, and optimizing the lifespan of SSDs.

▶ FAQs

Q1: What is SSD bad block management?

A1: SSD bad block management refers to the techniques and algorithms employed by solid-state drive manufacturers to handle and mitigate the impact of bad blocks. Bad blocks are sections of the NAND flash memory that become unreliable or inaccessible over time, potentially leading to data corruption or loss. Effective bad block management ensures that these blocks are identified, isolated, and the data stored in them are relocated to healthy blocks.

Q2: How does bad block management affect SSD performance?

A2: Bad block management has a significant impact on SSD performance. When bad blocks are present, read and write operations can be slower due to the need for error correction and data relocation. Additionally, if bad blocks are not managed properly, they can cause system crashes and data loss, and reduce the overall lifespan of the SSD. Therefore, efficient bad block management techniques help maintain consistent performance and enhance the reliability of SSDs.

Q3: What are the key techniques used in SSD bad block management?

A3: SSD bad block management utilizes several key techniques, including wear leveling, error correction codes (ECC), and proactive bad block management algorithms. Wear leveling distributes write and erase operations evenly across the NAND flash memory, preventing certain blocks from wearing out faster than others. ECC helps detect and correct errors that may occur during data transfer or storage. Proactive bad block management algorithms identify and isolate bad blocks, preventing them from affecting the performance of the entire SSD.

Q4: Can bad block management prevent data loss?

A4: Yes, effective bad block management can help prevent data loss. By identifying and isolating bad blocks, SSDs can relocate the data stored in those blocks to healthy blocks. This proactive approach ensures that the integrity of the data is maintained and that potential data loss due to bad blocks is minimized. However, it’s always recommended to regularly back up important data to an external storage device or cloud storage to further safeguard against data loss.

Q5: Does bad block management only apply to individual SSDs?

A5: No, bad block management is not limited to individual SSDs. It can also have an impact on RAID arrays and enterprise storage systems. In these scenarios, efficient bad block management becomes even more critical as the failure of a single SSD can affect the entire array or system. By implementing robust bad block management techniques, organizations can enhance the reliability and durability of their storage infrastructure, minimizing the risk of data loss and improving overall system performance.